Why AI Agents Are Brutally Exposing the Failure of Your Chatbot Strategy

The Number Your CTO Doesn’t Want to See

Here’s a number that should make every CXO uncomfortable: 72% of customer service chatbot interactions require human escalation before a query is actually resolved (Salesforce, State of Service Report 2024). In Bangladesh, where over 120 million mobile internet users now expect seamless digital service across everything from bKash transactions to Daraz returns, that failure rate isn’t just a statistic. It’s a brand erosion timeline. The era of ‘I’m sorry, I didn’t understand that’ is over. Yet most companies across Dhaka’s corporate towers are still celebrating chatbot deployments as digital milestones. What they’ve actually built are glorified FAQ pages with a conversational wrapper. AI agents, systems that can reason, decide, and act on behalf of a customer in real time, represent a categorically different technology. And the brands that miss this distinction are already paying a price they can’t see on any dashboard.

The Chatbot Illusion: Bangladesh’s Most Expensive Digital Mistake

Let’s be precise about what a chatbot is. A rule-based chatbot is a decision tree dressed in natural language. It matches inputs to predefined patterns and returns scripted outputs. It cannot look up your account. It cannot initiate a refund. It cannot check your delivery status in real time and reroute a package. It can only answer questions it was already programmed to answer, in exactly the format it was programmed to answer them. According to Gartner’s 2024 analysis, fewer than 20% of enterprise chatbots can handle more than basic FAQ-level queries without failing or escalating.
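To make the "decision tree dressed in natural language" concrete, here is a minimal sketch of a rule-based bot. The patterns, replies, and the function name `rule_based_reply` are hypothetical illustrations, not taken from any vendor product.

```python
import re

# Hypothetical scripted rules: each pattern maps to exactly one canned reply.
RULES = [
    (re.compile(r"\b(opening|business) hours\b", re.I),
     "We are open 9 AM to 6 PM, Sunday to Thursday."),
    (re.compile(r"\breturn policy\b", re.I),
     "You can return items within 7 days of delivery."),
]

FALLBACK = "I'm sorry, I didn't understand that."


def rule_based_reply(message: str) -> str:
    """Return the first scripted reply whose pattern matches, else the fallback."""
    for pattern, reply in RULES:
        if pattern.search(message):
            return reply
    # No account lookup, no action taken: anything unscripted dead-ends here.
    return FALLBACK
```

Anything outside the scripted patterns, including a Banglish query like "amar parcel kothay?", falls straight through to the fallback line.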

The Bangladesh picture is sharper and more uncomfortable. LightCastle Partners’ 2024 Bangladesh Digital Business Report found that only 19% of the country’s top enterprises report their chatbot resolving queries without human handoff. Yet the investment in chatbot infrastructure continues. Companies are paying for two customer service operations simultaneously: the bot that fails and the human team that catches those failures. The operational math is brutal.

But the financial waste isn’t even the real problem. The real problem is the brand signal it sends. A customer who hits a chatbot dead end at 11 PM, when no human agent is available, doesn’t file that experience under ‘technology limitation.’ They file it under ‘this company doesn’t care.’ Forrester’s 2024 Customer Experience Index found that 60% of customers who fail to get resolution through automated channels switch to a competitor within 30 days. In a market where consumer trust is still being earned, and social proof on Facebook groups can make or break a brand overnight, that churn trajectory is devastating.

There’s also a language problem that’s uniquely ours. Standard NLP models are trained predominantly on English text. BASIS’s 2024 Tech Ecosystem Report estimates that accuracy drops 40-60% when these systems process Banglish, the code-mixed Bengali-English that dominates how Bangladeshis actually type online. So not only are we deploying tools that can’t act, we’re deploying tools that often can’t even accurately understand what our customers are saying.

What AI Agents Actually Are – And Why the Difference Is Not Subtle

An AI agent isn’t just a smarter chatbot. The architectural difference is fundamental. A chatbot is reactive and stateless: it waits for input, matches a pattern, returns an output, forgets everything. An AI agent is proactive, stateful, and tool-enabled: it maintains context across a session, it can call external APIs, query databases, execute transactions, and chain multiple actions together to reach a goal on the user’s behalf.

Think of it this way. A chatbot can tell you that your order is delayed. An AI agent can detect the delay automatically, identify alternative delivery options, notify you proactively, offer a discount code for the inconvenience, and update your delivery address if you ask, all within a single conversation, without a human in the loop. That’s not a marginal improvement. That’s a different category of service.

The academic framing here matters. Researchers at MIT’s Computer Science and AI Laboratory describe the agent architecture as a ‘perceive-reason-act’ loop, distinct from the pattern-matching pipeline of conventional chatbots. The agent perceives context (order history, account status, past interactions), reasons about the best course of action (using a large language model as the reasoning engine), and then acts through connected tools (APIs, databases, payment gateways). This loop can iterate in real time until the customer’s goal is actually achieved.
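The loop described above can be sketched in a few lines. This is an illustration under stated assumptions, not a production design: the `reason` function is a hard-coded stub standing in for the LLM call, and the two tools are hypothetical placeholders for real order and notification APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Session:
    """State the agent carries across steps (the 'stateful' part)."""
    order_id: str
    goal: str
    history: List[str] = field(default_factory=list)


# Hypothetical tools standing in for real backend APIs.
def check_order_status(session: Session) -> str:
    return "delayed"


def notify_customer(session: Session) -> str:
    return "customer notified"


TOOLS: Dict[str, Callable[[Session], str]] = {
    "check_order_status": check_order_status,
    "notify_customer": notify_customer,
}


def reason(session: Session) -> str:
    """Stub for the reasoning step; a real agent would call an LLM here."""
    if not session.history:
        return "check_order_status"
    if session.history[-1] == "check_order_status -> delayed":
        return "notify_customer"
    return "done"


def run_agent(session: Session, max_steps: int = 5) -> List[str]:
    """Iterate perceive -> reason -> act until the reasoner says 'done'."""
    for _ in range(max_steps):
        action = reason(session)                 # reason over the perceived context
        if action == "done":
            break
        result = TOOLS[action](session)          # act through a connected tool
        session.history.append(f"{action} -> {result}")  # perceive the outcome
    return session.history
```

The `max_steps` cap is a deliberate design choice: an agent that can chain actions also needs a hard limit on how long it can keep going.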

For brand strategy, this distinction matters enormously. Brand loyalty is built on one thing more than any other: the feeling that a company actually solved your problem. McKinsey’s 2024 Next in Personalization report found that companies that successfully deliver real-time problem resolution see customer satisfaction scores 20-35% higher than those relying on static self-service tools. Klarna’s data from its February 2024 AI agent rollout is the clearest proof point available: their agent handled 2.3 million customer conversations in the first month, the equivalent of 700 full-time agents, while cutting average resolution time from 11 minutes to under 2 minutes, with customer satisfaction scores on par with human agents.

But here’s the thing most strategy presentations miss: this only works because Klarna had clean, connected data infrastructure before deployment. The agent’s capability is entirely dependent on the quality of the systems it can access. An agent connected to a fragmented CRM with inconsistent customer data is no better than the chatbot it replaces, and potentially worse, because it will confidently act on incorrect information.

“An AI agent connected to broken data infrastructure will confidently do the wrong thing. That’s more dangerous than a chatbot that fails silently.”

The Causal Chain: How Chatbot Failure Damages Brands Slowly, Then All At Once

The damage model isn’t sudden. It compounds. A company deploys a chatbot to reduce support costs. The bot handles 15-20% of query types adequately. Complex queries (account disputes, delivery exceptions, service failures) hit scripted dead ends. The customer is forced to repeat their entire issue when transferred to a human agent, if one is even available. The experience registers as the brand being ‘digital but not helpful.’ The customer doesn’t cancel immediately. They just become slightly less loyal. They mention it to a colleague. They leave a frustrated comment in a Facebook group. Fourteen months later, acquisition costs have risen 18% and the retention team can’t pinpoint why. This is the brand tax that chatbot-era thinking is charging every year.

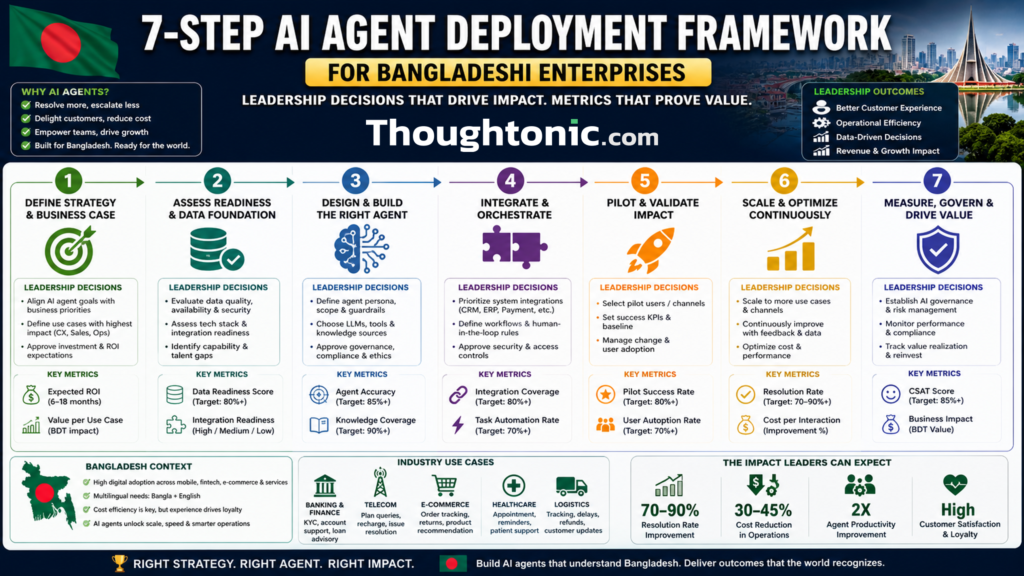

A Practical Framework for Transitioning to AI Agents in Bangladesh

Making this shift isn’t a single technology decision. It’s a sequenced set of leadership commitments. Here’s how I’d structure the transition for a mid-to-large Bangladeshi enterprise:

| Step | Leadership Decision | Trade-off | Success Metric | Effort |
| --- | --- | --- | --- | --- |
| 1. Audit | Admit your chatbot’s real FCR rate | Exposes past investment underperformance | First Contact Resolution % | Low |
| 2. Scope | Legal sign-off on agent action boundaries | Wider scope = more value, more risk | Query type coverage ratio | Medium |
| 3. Integrate | Prioritize CRM/OMS API connectivity | Integration complexity vs. capability | System connectivity index | High |
| 4. Localize | Invest in Banglish NLP training data | Build vs. buy NLP capability | Language accuracy score | High |
| 5. Govern | Define accountability and override protocols | Governance overhead vs. agent autonomy | Inappropriate action rate | Medium |
| 6. Pilot | Choose one low-risk, high-volume use case | Speed vs. safety in deployment | Pilot CSAT vs. baseline | Medium |
| 7. Scale | Fund expansion on evidence, not confidence | Growth rate vs. quality consistency | Customer Effort Score trend | High |

A few of these steps deserve extra attention in the Bangladesh context. Step 4 – localization – is where most global AI vendor solutions fall short for us. The difference between a system that understands ‘amar parcel kothay?’ and one that doesn’t isn’t a nice-to-have. It’s the entire user experience for a significant portion of your customer base. Step 5 – governance – is the step most companies skip in their rush to deploy. In a market with evolving regulations around digital financial services and consumer data, an AI agent that processes refunds without an audit trail is a compliance liability, not just a customer service tool.

The most common mistake I see: skipping the audit phase entirely because leadership already ‘knows’ the chatbot is performing well. It almost never is. Pull the escalation logs. Categorize the failure types. That data will tell you exactly where an agent would create the most value, and which use cases to avoid until your data infrastructure is ready.
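As a rough sketch of what that audit could look like once the logs are exported: the field names `escalated` and `failure_type` are assumptions about the export format, and FCR is simplified here to "share of conversations closed without escalation" (a stricter definition would also count repeat contacts).

```python
from collections import Counter
from typing import Any, Dict, List, Tuple


def audit_logs(rows: List[Dict[str, Any]]) -> Tuple[float, Counter]:
    """Return (simplified FCR rate, count of escalations per failure type)."""
    total = len(rows)
    if total == 0:
        return 0.0, Counter()
    # Conversations the bot closed without handing off to a human.
    resolved = sum(1 for row in rows if not row["escalated"])
    # Tally the reasons behind every escalation.
    failures = Counter(row["failure_type"] for row in rows if row["escalated"])
    return resolved / total, failures
```

Sorting the resulting counter with `failures.most_common()` gives a first-pass ranking of where an agent would add the most value.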

Case Studies: What Execution Actually Looks Like

Global: Klarna’s AI Agent Deployment (2024)

Klarna is the clearest global benchmark available because the company has published its numbers. In February 2024, the Swedish fintech company announced that their AI agent, built on OpenAI’s technology, had handled 2.3 million customer service conversations in its first month alone. That’s equivalent to the work of 700 full-time agents. Average resolution time dropped from 11 minutes to under 2 minutes. Customer satisfaction scores held at parity with human agents. Repeat inquiry rates, a proxy for resolution quality, fell 25%.

What made this work wasn’t the AI model itself. It was Klarna’s pre-existing investment in structured customer data, clean API architecture, and well-defined agent action boundaries. The agent knew exactly what it was allowed to do (process refunds up to a certain threshold, update payment plans, resolve disputes below a defined value) and what required human escalation. That governance clarity is what prevented costly errors.
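Action boundaries of this kind can be expressed as a small, explicit policy check that runs before any tool call. The sketch below is not Klarna’s implementation; the class names, the allowed actions, and the BDT 2,000 limit are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class ActionPolicy:
    # Hypothetical limits; the real values are a legal and leadership decision.
    max_refund_bdt: int = 2000
    allowed_actions: frozenset = frozenset({"refund", "update_address"})


def check_action(policy: ActionPolicy, action: str, amount_bdt: int = 0) -> Decision:
    """Allow an action only if it is whitelisted and within the refund limit."""
    if action not in policy.allowed_actions:
        return Decision.ESCALATE   # unknown actions always go to a human
    if action == "refund" and amount_bdt > policy.max_refund_bdt:
        return Decision.ESCALATE   # above the threshold: human approval needed
    return Decision.ALLOW
```

The default is deliberately "escalate": anything the policy does not explicitly permit goes to a human.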

The limitation here is significant for the Bangladesh market: Klarna operates in a mature regulatory environment with standardized financial data formats. Most Bangladeshi companies, even large ones, are still reconciling data across legacy systems. The Klarna blueprint is the right destination. The path to it requires infrastructure work that can’t be skipped.

South Asia: The bKash Customer Experience Evolution

bKash is Bangladesh’s most instructive local case, not because they’ve deployed full AI agents yet, but because their trajectory shows what’s possible and what the constraints are. With over 65 million registered users, bKash faces a customer service challenge that is genuinely massive in scale. Their progression from IVR-based support to increasingly intelligent digital assistance has been deliberate and measured.

In my analysis, what bKash has done well is sequence their capability building carefully. They’ve prioritized data integrity and back-end system connectivity before deploying conversational interfaces. The BRAC Institute of Governance and Development’s 2024 Digital Service Delivery Report suggests that companies that invest in back-end data architecture before AI deployment see 2-3x higher customer satisfaction outcomes than those that bolt AI onto existing fragmented systems.

The constraint bKash and every fintech in Bangladesh faces is Bangladesh Bank’s regulatory framework around autonomous digital transactions. An AI agent that processes MFS transactions without proper audit trails and human oversight checkpoints creates regulatory exposure that no brand can afford. This is why governance, step 5 in the framework, isn’t optional. It’s the condition under which the whole system is allowed to operate.

Action Plans: For Organizations and Professionals

For Organizations: Five Resisted Actions That Actually Move the Needle

- Audit your chatbot’s actual First Contact Resolution rate: not the internal estimate, but the real logged data. (Effort: Low. Timeline: 2 weeks. Budget: Internal.)

- Commission a Banglish NLP accuracy test on your current system. Most vendors will not volunteer this data. Ask for it. (Effort: Low. Timeline: 1 month. Budget: Under BDT 2 lakh.)

- Map every backend system that would need API access for an agent to be genuinely useful. This is your integration gap analysis. (Effort: Medium. Timeline: 6-8 weeks. Budget: Internal + IT.)

- Define, in writing, the action boundaries for any AI agent deployment before a single line of code is written. What can it do? What requires human approval? Who is accountable when it’s wrong? (Effort: Medium. Timeline: 4 weeks. Budget: Legal + leadership time.)

- Run a pilot in one contained, high-volume use case. Order status tracking is the safest starting point for most e-commerce and logistics players. Measure it for 90 days before scaling. (Effort: High. Timeline: 3-6 months. Budget: BDT 15-50 lakh depending on vendor vs. in-house build.)
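The Banglish accuracy test in the second action above does not need a vendor’s cooperation to get started. Here is a minimal sketch, assuming you can call your current system as a function that maps a message to an intent label. The `toy_classifier` is a deliberately naive stand-in for that system, and the sample messages and intent names are hypothetical.

```python
from typing import Callable, List, Tuple

Example = Tuple[str, str]  # (customer message, expected intent)


def intent_accuracy(classify: Callable[[str], str], examples: List[Example]) -> float:
    """Share of examples where the classifier returns the expected intent."""
    if not examples:
        raise ValueError("examples must not be empty")
    correct = sum(1 for text, expected in examples if classify(text) == expected)
    return correct / len(examples)


def relative_drop(english_acc: float, banglish_acc: float) -> float:
    """Relative accuracy drop from English to Banglish, as a fraction."""
    if english_acc == 0:
        raise ValueError("English accuracy is zero; the drop is undefined")
    return (english_acc - banglish_acc) / english_acc


def toy_classifier(text: str) -> str:
    """Naive stand-in for the system under test: English keywords only."""
    lowered = text.lower()
    if "where" in lowered and "parcel" in lowered:
        return "order_status"
    return "unknown"
```

Run the same intents through a matched English set and a Banglish set, then compare the two accuracy figures. The test sets should come from real customer messages, not from the vendor.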

For Professionals: Five Uncomfortable Skills to Build Now

- Prompt engineering for agentic systems: This is different from writing chatbot scripts. Agents need goal-oriented instructions, not conversation flows. It’s uncomfortable because most marketers haven’t engaged with how LLMs reason.

- Reading AI failure logs: Knowing how to diagnose why an agent took a wrong action is increasingly a core CX skill. It’s uncomfortable because it requires technical fluency most senior marketers have deliberately avoided.

- API integration basics: You don’t need to write code, but you need to understand what an API connection enables and what breaks it. Uncomfortable because it sits outside the traditional marketing toolkit.

- AI governance and accountability frameworks: Who owns the decision when an agent gives a customer wrong information? Understanding this at a policy level is now a board-level concern with marketing implications.

- Customer Effort Score measurement: CES, not CSAT, is the metric that most accurately captures whether an AI agent is genuinely helping. Most teams still measure satisfaction. They should measure effort.
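For the last skill, a minimal sketch of one common way to compute CES: the average response to a statement like "the company made it easy to resolve my issue". The 1-to-7 agreement scale is an assumption here; some teams use a 1-to-5 scale or report the share of "agree" responses instead.

```python
from statistics import mean
from typing import Iterable


def customer_effort_score(responses: Iterable[int], scale_max: int = 7) -> float:
    """Average survey response on a 1..scale_max scale (higher means less effort)."""
    scores = list(responses)
    if not scores:
        raise ValueError("no survey responses provided")
    if any(score < 1 or score > scale_max for score in scores):
        raise ValueError(f"responses must be between 1 and {scale_max}")
    return float(mean(scores))
```

Tracking this number for agent-handled and human-handled conversations separately is what makes the comparison meaningful.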

The Risks Nobody Puts in the Pitch Deck

Let me be direct about where these strategies typically fail in Bangladesh. Most AI agent implementations will not fail because of the technology. They’ll fail because the underlying data infrastructure isn’t ready. An agent connected to a CRM where 30% of records are duplicate or incomplete will act on bad information, confidently. That’s worse than a chatbot that fails with an apology.
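A quick way to find out where your own CRM stands is to measure it. The sketch below assumes records exported as dictionaries, treats `name` and `phone` as the required fields, and uses a repeated phone number as the duplicate signal. All three are assumptions; your own matching rules will be stricter.

```python
from typing import Any, Dict, List

REQUIRED_FIELDS = ("name", "phone")  # hypothetical minimum for a usable record


def bad_record_rate(records: List[Dict[str, Any]]) -> float:
    """Fraction of records that are incomplete or repeat an earlier phone number."""
    if not records:
        return 0.0
    seen_phones = set()
    bad = 0
    for record in records:
        # Incomplete: any required field missing or empty.
        incomplete = any(not record.get(name) for name in REQUIRED_FIELDS)
        phone = record.get("phone")
        # Duplicate: this phone number already appeared on an earlier record.
        duplicate = bool(phone) and phone in seen_phones
        if phone:
            seen_phones.add(phone)
        if incomplete or duplicate:
            bad += 1
    return bad / len(records)
```

If this number comes back anywhere near the 30% mentioned above, the integration work comes before the agent.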

There’s also an ethical risk that gets minimal airtime: accountability opacity. When an AI agent processes a refund or modifies an account, who is legally responsible if it’s wrong? Bangladesh Bank’s cybersecurity guidelines for digital financial services require audit trails and accountability structures that most AI agent deployments don’t natively provide. This isn’t a theoretical risk. It’s a compliance exposure.
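What an audit trail means in practice is a record written for every action the agent takes. The sketch below is illustrative only: the field names are my own assumptions and are not drawn from any Bangladesh Bank guideline, so the actual required fields need to come from your compliance team.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def audit_record(action: str, customer_id: str, params: Dict[str, Any],
                 decided_by: str, approved_by: Optional[str] = None) -> str:
    """Serialize one agent action as a JSON line for an append-only log."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "customer_id": customer_id,
        "params": params,
        "decided_by": decided_by,     # e.g. the agent version that chose the action
        "approved_by": approved_by,   # None means no human sign-off was recorded
    }
    return json.dumps(entry, sort_keys=True)
```

The `approved_by` field is the one that answers the accountability question: if it is empty on a high-value action, that is the gap to close.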

And here’s the contrarian scenario worth sitting with: a retail brand in Dhaka with a disciplined, well-trained WhatsApp-based human support team will outperform a poorly integrated AI agent on every meaningful customer satisfaction metric. The goal isn’t to automate for automation’s sake. The goal is to actually resolve the customer’s problem. Sometimes, especially during early infrastructure-building phases, more human attention, better structured, is the right answer.

Key Takeaways

- 72% of chatbot interactions require human escalation – that number is your starting benchmark, not a ceiling to accept. (Salesforce, 2024)

- AI agents differ from chatbots architecturally: they perceive, reason, and act across connected systems. The capability gap is not incremental.

- Klarna’s AI agent handled 2.3 million conversations in one month, the equivalent of 700 full-time agents, with average resolution time under 2 minutes. Clean data infrastructure made it possible.

- In Bangladesh, only 19% of large enterprises report chatbot resolution without human handoff. The investment in chatbots is ongoing while the performance case isn’t there.

- Banglish NLP accuracy is 40-60% lower in standard models. Localization isn’t optional for companies serving Bangladeshi customers.

- Governance and accountability frameworks must be defined before deployment. In regulated industries, this is a compliance requirement, not a best practice.

- Audit your actual First Contact Resolution rate before building any business case for AI agents. The real number is almost always worse than the internal estimate.

- A well-integrated human support team will beat a poorly integrated AI agent every time. Infrastructure readiness is a prerequisite, not a parallel workstream.

Bibliography

Global Sources

- Salesforce State of Service Report 2024 — Salesforce Research, 2024

- Gartner Conversational AI and Chatbot Analysis 2024 — Gartner, 2024

- Klarna AI Press Release: One Month In — Klarna, February 2024

- McKinsey Next in Personalization 2024 — McKinsey Global Institute, 2024

- Forrester Customer Experience Index 2024 — Forrester Research, 2024

- IBM Institute for Business Value: AI and Customer Service 2024 — IBM, 2024

- MIT CSAIL: Autonomous AI Agent Architecture — MIT, 2023-2024

- Harvard Business Review: AI in Customer Service — HBR, 2023

- PwC Global Consumer Insights Survey 2024 — PwC, 2024

- Deloitte Digital Consumer Trends 2024 — Deloitte, 2024

Bangladesh and South Asia Specific Sources

- LightCastle Partners: Bangladesh Digital Business Report 2024 — LightCastle Partners, 2024

- Bangladesh Telecommunication Regulatory Commission (BTRC): Internet Subscribers Report 2024 — BTRC, 2024

- BASIS Bangladesh Tech Ecosystem Report 2024 — Bangladesh Association of Software and Information Services, 2024

- e-Commerce Association of Bangladesh (e-CAB) Consumer Survey 2024 — e-CAB, 2024

- BRAC Institute of Governance and Development: Digital Service Delivery Report 2024 — BIGD, 2024

- Bangladesh Bank: Digital Financial Services and Cybersecurity Guidelines 2024 — Bangladesh Bank, 2024