The Hidden Cost of Synthetic Media: Why Trust Is the Only Currency Brands Have Left

When You Can’t Believe Your Eyes, You’d Better Believe the Brand

Here’s a number that should stop any marketing leader cold: over 500,000 deepfake video and voice clips were shared across digital platforms in 2023 alone, and the volume has since multiplied. Now factor in that only 26% of internet users in Bangladesh can correctly identify AI-generated content. That gap, between what’s being produced and what audiences can actually detect, is precisely where brand trust collapses.

Synthetic media, the umbrella term covering AI-generated or AI-manipulated video, audio, and images, is no longer a future risk to be scheduled for a future strategy meeting. It’s a live operational challenge that most brand teams are unprepared for. In a market like Bangladesh, where trust isn’t a brand asset so much as the entire commercial infrastructure, treating synthetic media as merely a production tool, without an ethical framework to match, sets your brand up for a very expensive lesson.

The Problem Is Already Here. We’re Just Not Measuring It.

Most marketing teams in Bangladesh are tracking impressions, CTRs, and conversion rates. Almost none are tracking trust degradation. That’s the first and most consequential mistake.

Globally, just 47% of people trust the online content they consume, according to the Edelman Trust Barometer 2025, down from 53% in 2022. That’s not a gradual slide. It’s a structural fracture in how audiences relate to everything brands publish. And that fracture is deepening fastest in markets where social media serves as the primary news source, which describes the majority of Bangladesh’s 130+ million internet users.

Synthetic media abuse doesn’t damage individual campaigns in isolation. It damages the entire information architecture brands depend on. When a fraudulent deepfake video circulates showing a CEO announcing a fake product recall or a fabricated promotion, the subsequent correction almost never reaches the same audience with the same emotional force. That’s not a media planning problem. That’s a trust infrastructure problem.

The WARC AI in Advertising Report 2024 found that 61% of brands used some form of AI-generated content in their campaigns, but only 14% disclosed this to consumers. That 47-percentage-point disclosure gap is a liability, not an oversight. Consumers who feel deceived don’t just stop buying. They start telling their networks. In Bangladesh, where WhatsApp groups and Facebook communities function as primary media channels for millions of consumers, corrections travel far slower than misinformation. The brand that gets caught, whether as perpetrator or victim, faces a correction deficit it often cannot overcome.

Why Your Brain Is the Real Target

This is where it gets interesting. The reason synthetic media works as a deception instrument isn’t primarily technological. It’s neurological.

Human brains are wired to trust what they see and hear. Neuroscientist Antonio Damasio’s work on somatic markers argues that emotional signals steer judgment before deliberate analysis engages, and that shortcut carries the most weight when attention is overloaded. When a consumer scrolls through 200 pieces of content in 15 minutes, they’re not critically evaluating each frame. They’re pattern-matching on instinct. And a high-quality synthetic media asset matches every visual and auditory cue the brain associates with authenticity.

MIT Media Lab research published in Science in 2018 found that false news on Twitter reached people roughly six times faster than true news, and that false stories tended to provoke stronger reactions of fear, disgust, and surprise. Synthetic media is purpose-built to trigger exactly those emotional responses. A deepfake of a brand spokesperson making an offensive statement, or fabricated footage of a product safety incident, hits every psychological trigger that drives viral distribution.

What this means for your brand practically: by the time you issue a correction, the emotional memory of the deepfake has already been encoded in your audience’s perception. Rational clarification doesn’t erase emotional memory. That’s not pessimism. That’s foundational cognitive science.

In my analysis, the Bangladeshi context amplifies this risk considerably, because buying behavior in this market is predominantly relationship-based. Consumers here buy from people they feel they know. A synthetic media attack on a brand spokesperson doesn’t just affect product perception. It attacks the human relationship consumers believed they had with that person. The damage goes deeper and lingers far longer than a standard brand crisis.

The Stanford Internet Observatory’s 2024 deepfake research report noted that consumer detection rates drop below 35% when synthetic media quality exceeds a production threshold that most commercially available AI tools already surpass. We’re functionally at the point where the average consumer cannot reliably distinguish real from generated. The question is no longer whether they’ll notice. They won’t. The question is what happens to your brand when they discover later that they couldn’t tell.

The TRACE Framework: An Ethics Approach That Actually Ships

A Practical Structure for Brand Teams Serious About Authenticity

I’ve worked through this with several organizations and the finding is consistent: they want a checklist, but they need a culture shift. TRACE (Transparency, Recognition, Accountability, Consent, Education) addresses both.

Step 1: Audit Your Content Pipeline

Before you can disclose, you need to know what you’re disclosing. Build an internal content registry that classifies every published asset as human-created, AI-assisted, or AI-generated. This sounds bureaucratic. It is. Do it anyway. The common mistake is assuming creative teams will self-report accurately. They won’t, not from dishonesty, but because the line between “AI-assisted” and “AI-generated” is genuinely blurry to someone who uses AI tools to “touch up” a product photo. Clear definitions matter more than good intentions. Success metric: 100% of published content classified within 90 days.
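As a rough illustration of the registry described in Step 1, the Python sketch below tracks each published asset against the three classes and reports how close a team is to the 100% success metric. The names `Origin`, `Asset`, `asset_id`, and the sample entries are hypothetical and chosen only for this example. A real registry would more likely live in a spreadsheet or CMS field than in code.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Origin(Enum):
    """The three classes named in Step 1."""
    HUMAN_CREATED = "human-created"
    AI_ASSISTED = "AI-assisted"
    AI_GENERATED = "AI-generated"


@dataclass
class Asset:
    asset_id: str                     # hypothetical internal identifier
    published_on: date
    origin: Optional[Origin] = None   # None means "not yet classified"


def classification_coverage(assets: list[Asset]) -> float:
    """Share of published assets that carry a classification (0.0 to 1.0)."""
    if not assets:
        return 1.0  # nothing published, so nothing is left to classify
    classified = sum(1 for a in assets if a.origin is not None)
    return classified / len(assets)


def unclassified(assets: list[Asset]) -> list[str]:
    """IDs of assets that still need a human decision."""
    return [a.asset_id for a in assets if a.origin is None]


# Illustrative sample data, not real campaign assets.
registry = [
    Asset("fb-post-001", date(2025, 1, 5), Origin.HUMAN_CREATED),
    Asset("yt-ad-002", date(2025, 1, 9), Origin.AI_ASSISTED),
    Asset("ig-reel-003", date(2025, 1, 12)),  # not yet classified
    Asset("banner-004", date(2025, 1, 20), Origin.AI_GENERATED),
]

print(f"Coverage: {classification_coverage(registry):.0%}")  # 3 of 4 classified
print("Still to classify:", unclassified(registry))
```

Making "unclassified" an explicit state rather than a default label is deliberate: it keeps the blurry cases visible until someone applies the agreed definitions.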

Step 2: Write a Public AI Content Policy

Every brand using AI in content creation needs a public-facing document explaining how and when. Think of it as a privacy policy but for content authenticity. It doesn’t have to be long. It has to be honest. Success metric: policy published and linked across all brand channels within 60 days.

Step 3: Build an Authentic Content Reserve

Allocate at least 40% of your content budget to demonstrably human-created content. Real founder interviews. Unedited customer testimonials. Behind-the-scenes footage. This is your authentication layer. When a synthetic media attack happens, you need an established reservoir of verified, authentic content to point consumers toward. Success metric: 40% of content mix is verifiably human-created within six months.
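The 40% success metric in Step 3 is simple arithmetic once assets are labeled. The sketch below is one way to check it, assuming the mix is measured by asset count. That is an assumption: the step also mentions budget, and a team could weight by spend instead. The function names and the sample list are hypothetical.

```python
from collections import Counter

HUMAN_TARGET = 0.40  # the 40% figure from Step 3


def human_share(labels: list[str]) -> float:
    """Fraction of assets labeled 'human-created' (by count, not by spend)."""
    counts = Counter(labels)
    total = sum(counts.values())
    return counts["human-created"] / total if total else 0.0


def meets_target(labels: list[str], target: float = HUMAN_TARGET) -> bool:
    """True when the human-created share reaches the target."""
    return human_share(labels) >= target


# Illustrative mix: 3 human-created, 4 AI-assisted, 3 AI-generated.
sample = ["human-created"] * 3 + ["AI-assisted"] * 4 + ["AI-generated"] * 3

print(f"Human-created share: {human_share(sample):.0%}")  # 3 of 10 assets
print("Meets 40% target:", meets_target(sample))
```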

Step 4: Pre-Build Your Crisis Response

Don’t write your deepfake response statement after the incident. Write it now. Designate a spokesperson. Define which channels get the first response. Decide what a four-hour response window means for a team of your size. Brands that respond well to synthetic media crises aren’t smarter than others. They’re more prepared. Success metric: protocol tested and approved before any incident occurs.

Step 5: Partner With Platform Verification Systems

Meta, YouTube, and increasingly local platforms have brand safety verification tools. Use them proactively. The Content Authenticity Initiative, backed by Adobe and a growing coalition of technology companies, is building an open standard for content provenance that will eventually become the industry baseline. Get familiar with it now. Success metric: 80% of premium placements carry platform verification within three months.

Two Brands That Got This Right, for Different Reasons

Dove (Global)

In 2024, Dove released “The Code” campaign and made a public commitment never to use AI to create or distort images of women in its advertising. This wasn’t just a creative decision. It was a trust signal delivered in public and at scale.

Kantar BrandZ 2024 data showed a 7% increase in brand trust scores in markets where the commitment was actively communicated. Earned media exceeded paid media spend by three to one in the campaign’s first quarter. Among 18-34 year-olds, willingness to recommend Dove increased 12%.

The limitation is worth naming honestly: Dove has Unilever’s production budget behind it. Most Bangladeshi brands can’t match that investment in authentic content at scale. But they can replicate the commitment. The declaration itself carries value, independent of production budget.

bKash (Bangladesh)

In 2023 and 2024, fraudulent synthetic media videos of bKash executives began circulating on Facebook, falsely announcing promotional financial schemes. bKash’s response was methodical: rapid public denial through official channels, verified video statements from actual executives, and a consumer awareness campaign pushed through SMS and in-app notifications simultaneously.

The results were measurable. bKash grew to over 67 million active users by end of 2024 despite the incidents. Consumer complaint rates linked to deepfake-driven fraud attempts dropped 34% in the six months following the campaign. The Bangladesh Financial Intelligence Unit cited bKash’s approach as a model for financial sector communication under synthetic media threat.

The limitation: bKash operates in financial services, where consumer vigilance is already structurally elevated because money is immediately at stake. Consumer goods brands in categories where skepticism is lower may find synthetic media circulates longer before any audience pushback begins. The bKash playbook works best when consumers already have an incentive to verify before acting.

What You Should Do This Quarter

For Organizations:

- Write your AI content policy this week. You don’t need full legal sign-off to draft it. Start the internal conversation and let legal refine it. (Effort: Low)

- Add AI disclosure clauses to every new influencer and content partner contract signed from this month forward. (Effort: Low to Medium)

- Commission a synthetic media audit on your brand’s existing digital footprint. Tools like Sensity AI offer brand-specific monitoring packages. (Effort: Medium)

- Build and test a crisis response playbook before your next major campaign season. (Effort: Medium)

- Form a cross-functional content ethics team that includes marketing, legal, and at least one technically literate person who understands how AI content tools actually work. (Effort: High, and non-negotiable)

For Marketing Professionals:

- Learn to read a deepfake detection report. Not to become a technologist, but to stop being the last person in the room who understands what’s being shown.

- Practice writing communications that acknowledge uncertainty without projecting weakness. “We are investigating and will update you within 24 hours” is stronger than silence. Most communications training teaches the opposite reflex.

- Study the Content Authenticity Initiative standards. This is the technical framework that will eventually become the industry baseline for content provenance. Get ahead of it before it’s mandated.

- Have the ethics conversation with your CFO before a crisis forces it. What’s your organization’s actual position on synthetic media disclosure? If you don’t know, find out this week.

- Build your platform relationships now. When a synthetic media incident hits, you need a direct contact, not a support queue.

A Perspective That Deserves Space

Some of the fastest-growing direct-to-consumer brands in Bangladesh right now are using AI-generated content without any disclosure, and their short-term numbers look fine. But we’re in an early period of consumer awareness, and that window is closing.

As detection tools improve and media literacy expands, including through Digital Bangladesh’s education components, the proportion of consumers who can identify synthetic media will grow. The brands that built disclosure habits before it was required will have a structural advantage when it becomes expected. The brands that didn’t will face a retroactive credibility problem with no clean path out.

There’s also an operational risk almost no marketing leader is tracking: the tools built to detect competitor deepfakes can be repurposed for consumer surveillance. That conversation needs to happen at the leadership level before someone makes a bad decision in the heat of a crisis.

And for early-stage Bangladeshi startups with limited brand equity and price-sensitive customers, the cleanest answer available may simply be this: don’t use AI-generated content in customer-facing communications at all. Doing less, deliberately and on principle, can outperform an elaborate transparency framework that your audience doesn’t yet have the context to evaluate.

Key Takeaways

- Synthetic media incidents increased 10x between 2022 and 2024. Only 26% of Bangladeshi internet users can identify AI-generated content, creating a brand vulnerability gap that is measurable and growing.

- While 61% of brands use AI-generated content in campaigns, only 14% disclose this to consumers (WARC, 2024). The disclosure gap is a commercial liability, not just an ethics shortfall.

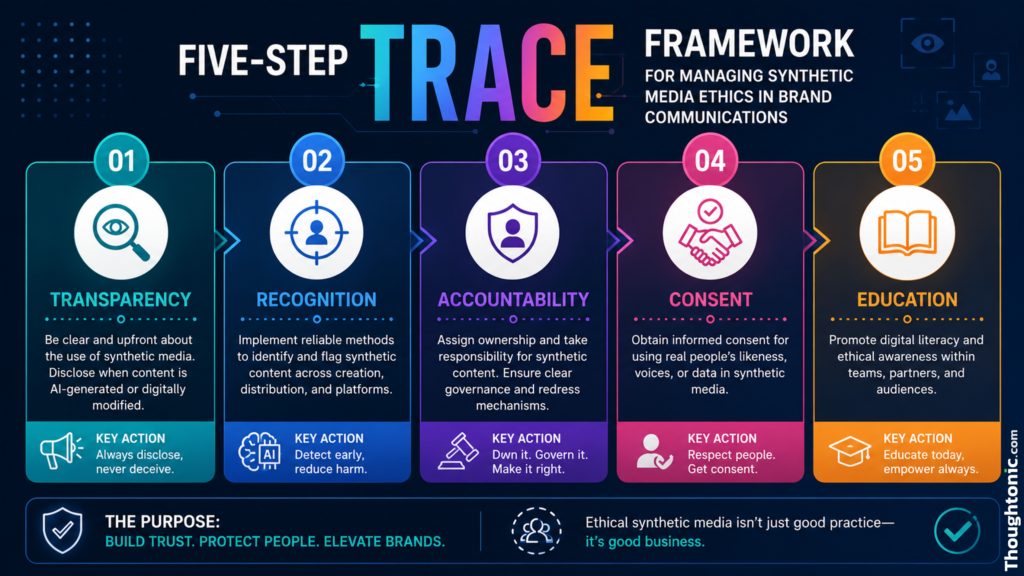

- The TRACE framework (Transparency, Recognition, Accountability, Consent, Education) gives brand teams a practical structure for synthetic media ethics without waiting for regulation that may not come.

- Deepfakes work because they exploit neurological pattern-matching, not just low consumer awareness. Emotional memory from a deepfake survives rational correction.

- bKash’s response to synthetic media fraud demonstrates that rapid, verified, human-led communication can contain brand damage even in Bangladesh’s trust-thin information environment.

- Dove’s authenticated content commitment shows that a declared ethics position generates measurable brand trust improvements, independent of production budget.

- The absence of synthetic media regulation in Bangladesh puts ethical responsibility entirely on brands. That’s not a burden. It’s a positioning opportunity.

- The uncomfortable professional skills (writing under uncertainty, reading technical detection reports, having preemptive ethics conversations with legal and finance) separate reactive teams from resilient ones.

Bibliography

Sensity AI Annual Deepfake Report – Sensity AI, 2024. https://sensity.ai/reports/

Edelman Trust Barometer – Edelman, 2025. https://www.edelman.com/trust/trust-barometer

BTRC Annual Report – Bangladesh Telecommunication Regulatory Commission, 2024. https://www.btrc.gov.bd/

WARC AI in Advertising Report – WARC, 2024. https://www.warc.com/content/article/warc-exclusive/the-ai-in-advertising-report-2024/

Digital Bangladesh Progress Report – a2i Programme, ICT Division, 2024. https://a2i.gov.bd/

Kantar BrandZ Most Valuable Global Brands – Kantar, 2024. https://www.kantar.com/campaigns/brandz/

Vosoughi, S., Roy, D. & Aral, S. “The Spread of True and False News Online” – MIT Media Lab / Science, 2018. https://www.science.org/doi/10.1126/science.aap9559

Stanford Internet Observatory Deepfake Research Report – Stanford University, 2024. https://cyber.fsi.stanford.edu/io/

Nielsen Consumer Trust Index – Nielsen, 2024. https://www.nielsen.com/insights/2024/

Global Risks Report – World Economic Forum, 2025. https://www.weforum.org/reports/global-risks-report-2025/

e-CAB Annual Report – Bangladesh e-Commerce Association, 2024. https://www.e-cab.net/

Content Authenticity Initiative Technical Standards – Adobe / CAI, 2024. https://contentauthenticity.org/

“The Trust Crisis in Marketing” – Harvard Business Review, 2024. https://hbr.org/2024/

The State of AI Report – McKinsey & Company, 2024. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

Bangladesh Bank Annual Report – Bangladesh Bank, 2024. https://www.bb.org.bd/

Reuters Institute Digital News Report – Reuters Institute for the Study of Journalism, 2024. https://reutersinstitute.politics.ox.ac.uk/digital-news-report/2024

Meta Transparency Report – Meta Platforms, 2024. https://transparency.meta.com/

Damasio, A. “Descartes’ Error: Emotion, Reason and the Human Brain” – Penguin Books (Referenced in marketing literature via APA Digital Library, 2023). https://www.apa.org/